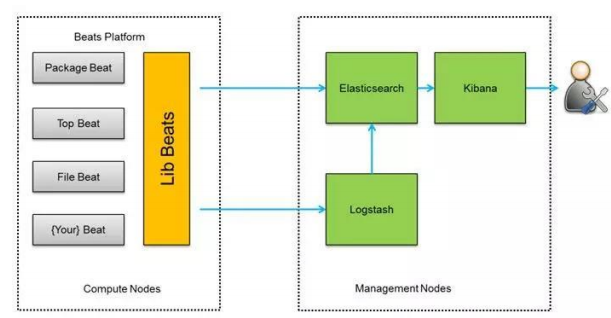

Logstash will receive, process, and send our logs to Elasticsearch, and we will be able to visualize them with Kibana. We'll start with the ELK side of things and then move on to the Nginx configuration.

Our new Docker machine will run in a t2.small EC2 instance. We can create it with `docker-machine create -d amazonec2 --amazonec2-instance-type t2.small elk-machine-name`.

I found existing ELK images that made things a lot easier, `sebp/elk:622` and `sebp/elkx:622`, and I recommend you read their documentation. We will use the `elkx:622` image, which is based on the `elk` one. It comes with X-Pack, which provides some nice features; one of them is Kibana authentication.

We're going to replace the certificates that come with the image with our own, and we'll also replace the Logstash configuration for Nginx. The elkx image ships with default certificates, but we'll generate our own pair, `compose/production/elk/logstash-beats.crt` and `compose/production/elk/logstash-beats.key`. This procedure differs from operating system to operating system. The Dockerfile, `compose/production/elk/Dockerfile`, starts `FROM sebp/elkx:622` and adds four `COPY` instructions:

- `COPY ./compose/production/elk/logstash-beats.crt /etc/pki/tls/certs/logstash-beats.crt`
- `COPY ./compose/production/elk/logstash-beats.key /etc/pki/tls/private/logstash-beats.key`
- `COPY ./compose/production/elk/nf /etc/logstash/conf.d/nf`
- `COPY ./compose/production/elk/nginx.pattern /opt/logstash/patterns/nginx`
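The post doesn't show how the certificate pair is generated, and the steps vary by operating system. One fairly portable option, assuming the `openssl` CLI is installed, is to drive `openssl req` from a small Python helper. The helper names, the 3650-day lifetime, and the `/CN=*` subject are my own choices, not taken from the original setup. Depending on your Filebeat version and its `ssl.verification_mode` setting, a certificate that only sets a Common Name may be rejected, in which case you'd need a subjectAltName as well.

```python
import shutil
import subprocess
from pathlib import Path


def openssl_cert_command(key_path, crt_path, days=3650, subject="/CN=*"):
    """Build an argv list that creates a self-signed certificate and key.

    -x509 makes `openssl req` emit a self-signed certificate instead of a
    signing request, -nodes leaves the private key unencrypted (no
    passphrase), and -batch skips the interactive prompts.
    The default subject and lifetime are illustrative assumptions.
    """
    return [
        "openssl", "req", "-x509", "-batch", "-nodes",
        "-subj", subject,
        "-days", str(days),
        "-newkey", "rsa:2048",
        "-keyout", str(key_path),
        "-out", str(crt_path),
    ]


def generate_logstash_beats_cert(directory="compose/production/elk"):
    """Write logstash-beats.key and logstash-beats.crt into `directory`.

    Hypothetical helper: requires the openssl binary on PATH.
    """
    if shutil.which("openssl") is None:
        raise RuntimeError("openssl not found on PATH")
    out_dir = Path(directory)
    out_dir.mkdir(parents=True, exist_ok=True)
    cmd = openssl_cert_command(out_dir / "logstash-beats.key",
                               out_dir / "logstash-beats.crt")
    subprocess.run(cmd, check=True)  # raises CalledProcessError on failure
    return cmd
```

Calling `generate_logstash_beats_cert()` from the repository root writes both files where the Dockerfile's `COPY` lines expect them. Because the arguments are passed as a list rather than through a shell, the same call behaves the same way on Linux, macOS, and Windows.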

#FILEBEATS NGINX ACCESS LOG INSTALL#

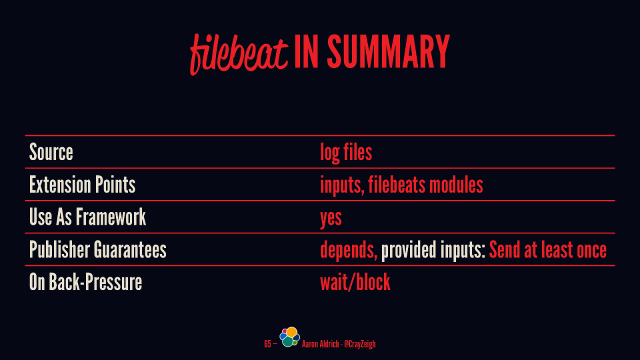

With this new setup, our ELK stack will persist the logs in another Docker machine. We will install Filebeat in our Nginx Docker image and let it ship the logs to the ELK stack.
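Each line Filebeat ships is a raw Nginx access log entry, and the Logstash side turns it into structured fields using the Nginx pattern copied into the image. The post's `nginx.pattern` isn't shown, so purely as an illustration, here is a Python sketch of the same kind of parse. It assumes Nginx's default `combined` log format. The regex and field names are my own, named after Nginx's log variables, and they are not the grok pattern Logstash actually uses.

```python
import re

# Nginx's built-in "combined" log format (assumed here):
# $remote_addr - $remote_user [$time_local] "$request" $status
# $body_bytes_sent "$http_referer" "$http_user_agent"
COMBINED_RE = re.compile(
    r'(?P<remote_addr>\S+) - (?P<remote_user>\S+) '
    r'\[(?P<time_local>[^\]]+)\] '
    r'"(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<body_bytes_sent>\d+) '
    r'"(?P<http_referer>[^"]*)" "(?P<http_user_agent>[^"]*)"'
)


def parse_access_line(line):
    """Split one combined-format access log line into fields, or return None."""
    match = COMBINED_RE.match(line.strip())
    if match is None:
        return None
    fields = match.groupdict()
    fields["status"] = int(fields["status"])
    fields["body_bytes_sent"] = int(fields["body_bytes_sent"])
    # "$request" is normally "METHOD PATH PROTOCOL"; anything else
    # (for example an empty request string) keeps only the raw value.
    parts = fields["request"].split(" ")
    if len(parts) == 3:
        fields["method"], fields["path"], fields["protocol"] = parts
    return fields
```

For a made-up line such as `203.0.113.7 - - [19/Feb/2021:15:40:43 +0100] "GET /index.html HTTP/1.1" 200 612 "-" "curl/7.68.0"`, the result has `status` 200, `method` "GET", and `path` "/index.html". Those are the kinds of fields you'd filter on in Kibana.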

#FILEBEATS NGINX ACCESS LOG UPDATE#

In this post, I will go over setting up an ELK stack (Elasticsearch, Logstash, and Kibana) with the setup we've been working on throughout these posts. Right now, if we deploy an update with the scripts from the last post, the Nginx logs will be lost, because the update creates new Docker machines.

Nginx comes with a default site configuration, which is sufficient for testing. Once Nginx is running, `systemctl status nginx` should report something like this:

- `Loaded: loaded (/lib/systemd/system/rvice; enabled; vendor preset: enabled)`
- `Active: active (running) since Fri 15:40:43 CET; 24h ago`
- `├─1480738 nginx: master process /usr/sbin/nginx -g daemon on; master_process on;`
- `Feb 19 15:40:43 valhalla systemd: Starting A high performance web server and a reverse proxy server.`
- `Feb 19 15:40:43 valhalla systemd: Started A high performance web server and a reverse proxy server.`

However, we first need to configure the Nginx process to expose some metrics. These metrics will be scraped by the Nginx Prometheus Exporter and then by Prometheus. Open a new site configuration with `sudo nano /etc/nginx/sites-available/stub_nf` and paste the following configuration into the editor.
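Nginx's `stub_status` module answers with a short plain-text page, and that page is what the Nginx Prometheus Exporter reads. The configuration to paste isn't reproduced here. The `/nginx_status` URL below is therefore a hypothetical location; substitute whatever path your stub status site exposes. Here is a minimal Python parser for the page format, which is handy for checking the endpoint before wiring up the exporter:

```python
import re
from urllib.request import urlopen

# stub_status replies with four short lines, e.g.
#   Active connections: 2
#   server accepts handled requests
#    10 10 25
#   Reading: 0 Writing: 1 Waiting: 1
STUB_STATUS_RE = re.compile(
    r"Active connections:\s*(?P<active>\d+)\s+"
    r"server accepts handled requests\s+"
    r"(?P<accepts>\d+)\s+(?P<handled>\d+)\s+(?P<requests>\d+)\s+"
    r"Reading:\s*(?P<reading>\d+)\s+Writing:\s*(?P<writing>\d+)\s+"
    r"Waiting:\s*(?P<waiting>\d+)"
)


def parse_stub_status(text):
    """Turn the plain-text stub_status page into a dict of integers."""
    match = STUB_STATUS_RE.search(text)
    if match is None:
        raise ValueError("not a stub_status response")
    return {name: int(value) for name, value in match.groupdict().items()}


def fetch_stub_status(url="http://127.0.0.1/nginx_status", timeout=5):
    """Fetch and parse the status page; the default URL is hypothetical."""
    with urlopen(url, timeout=timeout) as resp:
        return parse_stub_status(resp.read().decode("ascii"))
```

The `accepts`, `handled`, and `requests` values are running totals since Nginx started. The other four are point-in-time connection counts.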

This article will explore options for monitoring a web application served by an Nginx web server, using many of the Logz.io tools. Logz.io's Prometheus-as-a-Service will allow us to collect and store our metrics, and by visualizing them in the Metrics UI we can create dashboards and graphs of that data. Logs from our system and from Nginx will also be transported to the Logz.io servers, so we can search and access them, all without installing anything except the agents.

We are going to use Nginx as a web server and serve the default Nginx static page as an application. The important part here is gathering the metrics from Nginx. For this, we will expose metrics and use the Nginx Prometheus Exporter to collect them. Our main Prometheus instance will scrape the metrics from the exporters and write the data to a Logz.io Prometheus-as-a-Service instance, where we can use the built-in metrics UI to create and view dashboards.
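To see roughly what Prometheus ends up scraping, here is a sketch that renders the counters from Nginx's `stub_status` page in Prometheus's text exposition format. It only imitates the exporter. The metric names follow the ones the Nginx Prometheus Exporter uses for open-source Nginx, such as `nginx_connections_active` and `nginx_http_requests_total`. The help strings and the input dict layout (keys `active`, `accepts`, `handled`, `requests`, `reading`, `writing`, `waiting`) are my own assumptions.

```python
# (input key, metric name, metric type, help text). The names mirror the
# Nginx Prometheus Exporter; the help strings are paraphrases.
METRICS = [
    ("active", "nginx_connections_active", "gauge", "Active client connections"),
    ("accepts", "nginx_connections_accepted", "counter", "Accepted client connections"),
    ("handled", "nginx_connections_handled", "counter", "Handled client connections"),
    ("reading", "nginx_connections_reading", "gauge", "Connections where Nginx is reading the request header"),
    ("writing", "nginx_connections_writing", "gauge", "Connections where Nginx is writing the response"),
    ("waiting", "nginx_connections_waiting", "gauge", "Idle client connections"),
    ("requests", "nginx_http_requests_total", "counter", "Total HTTP requests"),
]


def to_exposition(status):
    """Render stub_status numbers as Prometheus text exposition lines.

    Each metric gets a # HELP line, a # TYPE line, and one sample line.
    """
    lines = []
    for key, name, metric_type, help_text in METRICS:
        lines.append(f"# HELP {name} {help_text}")
        lines.append(f"# TYPE {name} {metric_type}")
        lines.append(f"{name} {status[key]}")
    return "\n".join(lines) + "\n"
```

Running totals such as `nginx_http_requests_total` are typed as counters, so Prometheus can apply `rate()` to them. Instantaneous connection counts are gauges. This split carries through unchanged when the main Prometheus instance forwards the samples to Logz.io.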